Fix, Recursive, Vector, and Paragraph.

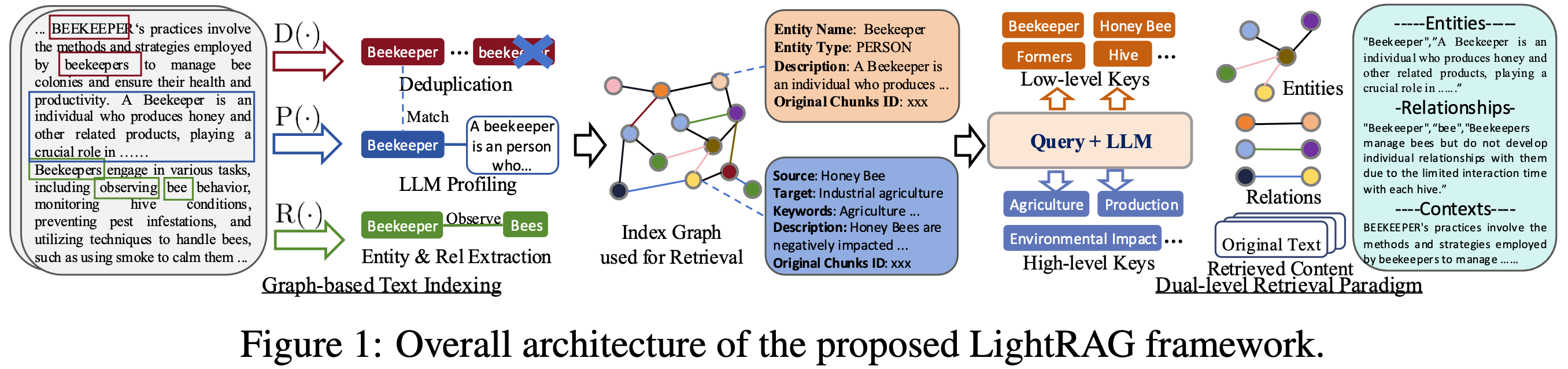

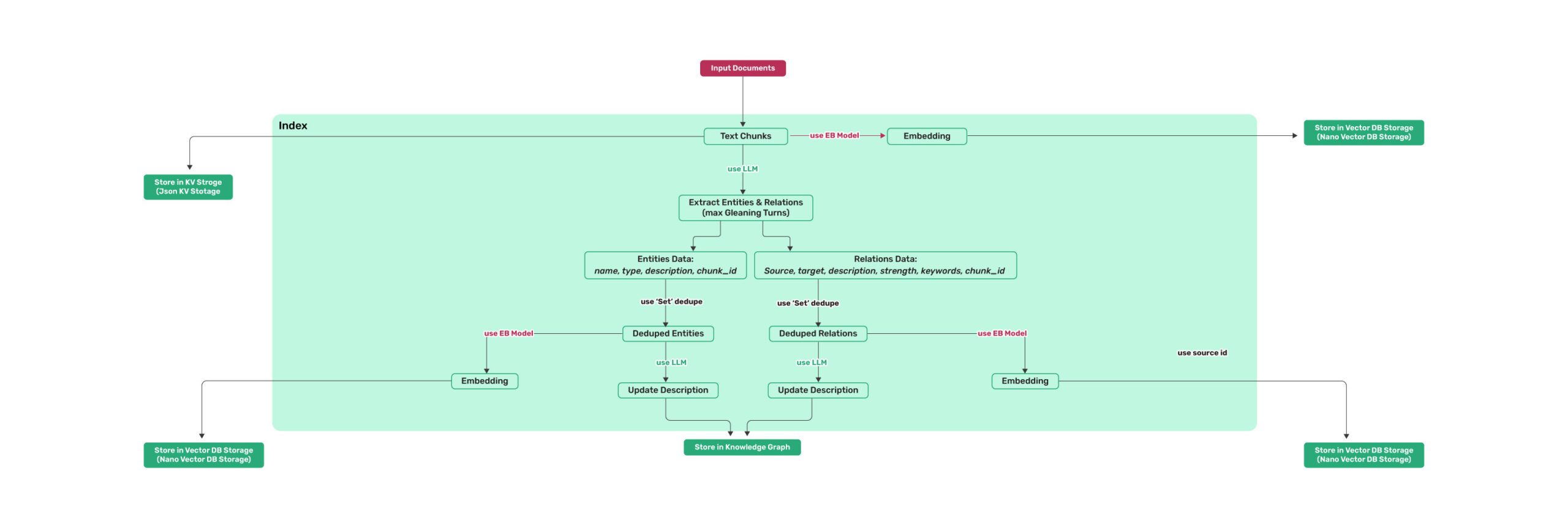

Figure 1: LightRAG Indexing Flowchart - Img Caption : Source

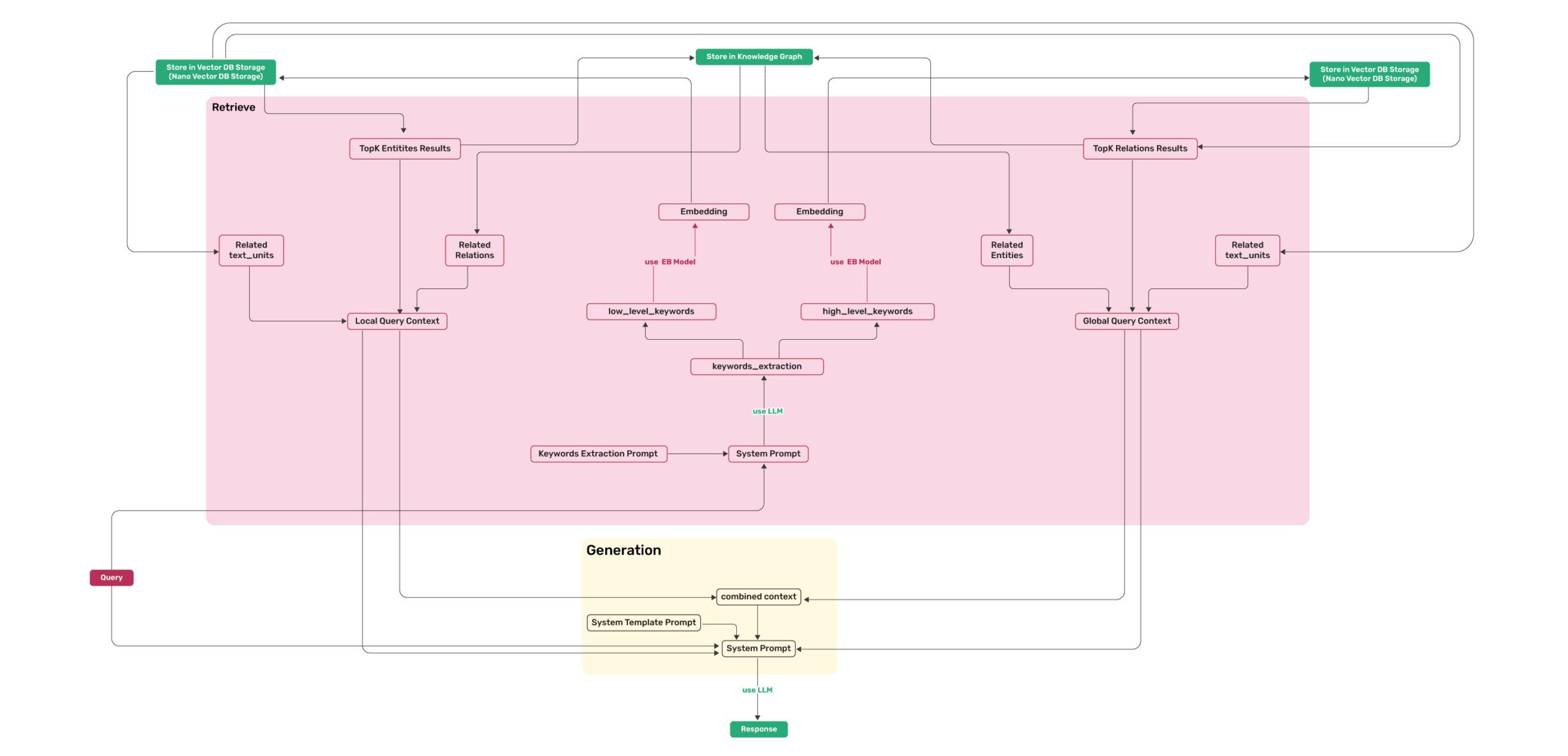

Figure 2: LightRAG Retrieval and Querying Flowchart - Img Caption : Source

💡 Using uv for Package Management: This project uses uv for fast and reliable Python package management. Install uv first: curl -LsSf https://astral.sh/uv/install.sh | sh (Unix/macOS) or powershell -c "irm https://astral.sh/uv/install.ps1 | iex" (Windows)

Note: You can also use pip if you prefer, but uv is recommended for better performance and more reliable dependency management.

📦 Offline Deployment: For offline or air-gapped environments, see the Offline Deployment Guide for instructions on pre-installing all dependencies and cache files.

The LightRAG Server is designed to provide Web UI and API support. The Web UI facilitates document indexing, knowledge graph exploration, and a simple RAG query interface. LightRAG Server also provides an Ollama-compatible interface, which presents LightRAG as an Ollama chat model. This allows AI chat bots, such as Open WebUI, to access LightRAG easily.

### Install LightRAG Server as tool using uv (recommended)

uv tool install "lightrag-hku[api]"

### Or using pip

# python -m venv .venv

# source .venv/bin/activate # Windows: .venv\Scripts\activate

# pip install "lightrag-hku[api]"

### Build front-end artifacts

cd lightrag_webui

bun install --frozen-lockfile

bun run build

cd ..

# Setup env file

# Obtain the env.example file by downloading it from the GitHub repository root

# or by copying it from a local source checkout.

cp env.example .env # Update the .env with your LLM and embedding configurations

# Launch the server

lightrag-server

git clone https://github.com/HKUDS/LightRAG.git

cd LightRAG

# Bootstrap the development environment (recommended)

make dev

source .venv/bin/activate # Activate the virtual environment (Linux/macOS)

# Or on Windows: .venv\Scripts\activate

# make dev installs the test toolchain plus the full offline stack

# (API, storage backends, and provider integrations), then builds the frontend.

# Run make env-base or copy env.example to .env before starting the server.

# Equivalent manual steps with uv

# Note: uv sync automatically creates a virtual environment in .venv/

uv sync --extra test --extra offline

source .venv/bin/activate # Activate the virtual environment (Linux/macOS)

# Or on Windows: .venv\Scripts\activate

### Or using pip with virtual environment

# python -m venv .venv

# source .venv/bin/activate # Windows: .venv\Scripts\activate

# pip install -e ".[test,offline]"

# Build front-end artifacts

cd lightrag_webui

bun install --frozen-lockfile

bun run build

cd ..

# setup env file

make env-base # Or: cp env.example .env and update it manually

# Launch API-WebUI server

lightrag-server

git clone https://github.com/HKUDS/LightRAG.git

cd LightRAG

cp env.example .env # Update the .env with your LLM and embedding configurations

# modify LLM and Embedding settings in .env

docker compose up

Historical versions of LightRAG docker images can be found here: LightRAG Docker Images

Official GHCR images published by GitHub Actions are signed with Sigstore Cosign using GitHub OIDC. See docs/DockerDeployment.md for verification commands.

Instead of editing env.example by hand, use the interactive setup wizard to generate a configured .env and, when needed, docker-compose.final.yml:

make env-base # Required first step: LLM, embedding, reranker

make env-storage # Optional: storage backends and database services

make env-server # Optional: server port, auth, and SSL

make env-base-rewrite # Optional: force-regenerate wizard-managed compose services

make env-storage-rewrite # Optional: force-regenerate wizard-managed compose services

make env-security-check # Optional: audit the current .env for security risks

For a full description of every target, see docs/InteractiveSetup.md.

The setup wizards update configuration only; run make env-security-check separately to audit the current .env for security risks before deployment.

By default, rerunning the setup preserves unchanged wizard-managed compose service blocks; use a *-rewrite target only when you need to rebuild those managed blocks from the bundled templates.

cd LightRAG

# Note: uv sync automatically creates a virtual environment in .venv/

uv sync

source .venv/bin/activate # Activate the virtual environment (Linux/macOS)

# Or on Windows: .venv\Scripts\activate

# Or: pip install -e .

uv pip install lightrag-hku

# Or: pip install lightrag-hku

LightRAG's demands on the capabilities of Large Language Models (LLMs) are significantly higher than those of traditional RAG, as it requires the LLM to perform entity-relationship extraction tasks from documents. Configuring appropriate Embedding and Reranker models is also crucial for improving query performance.

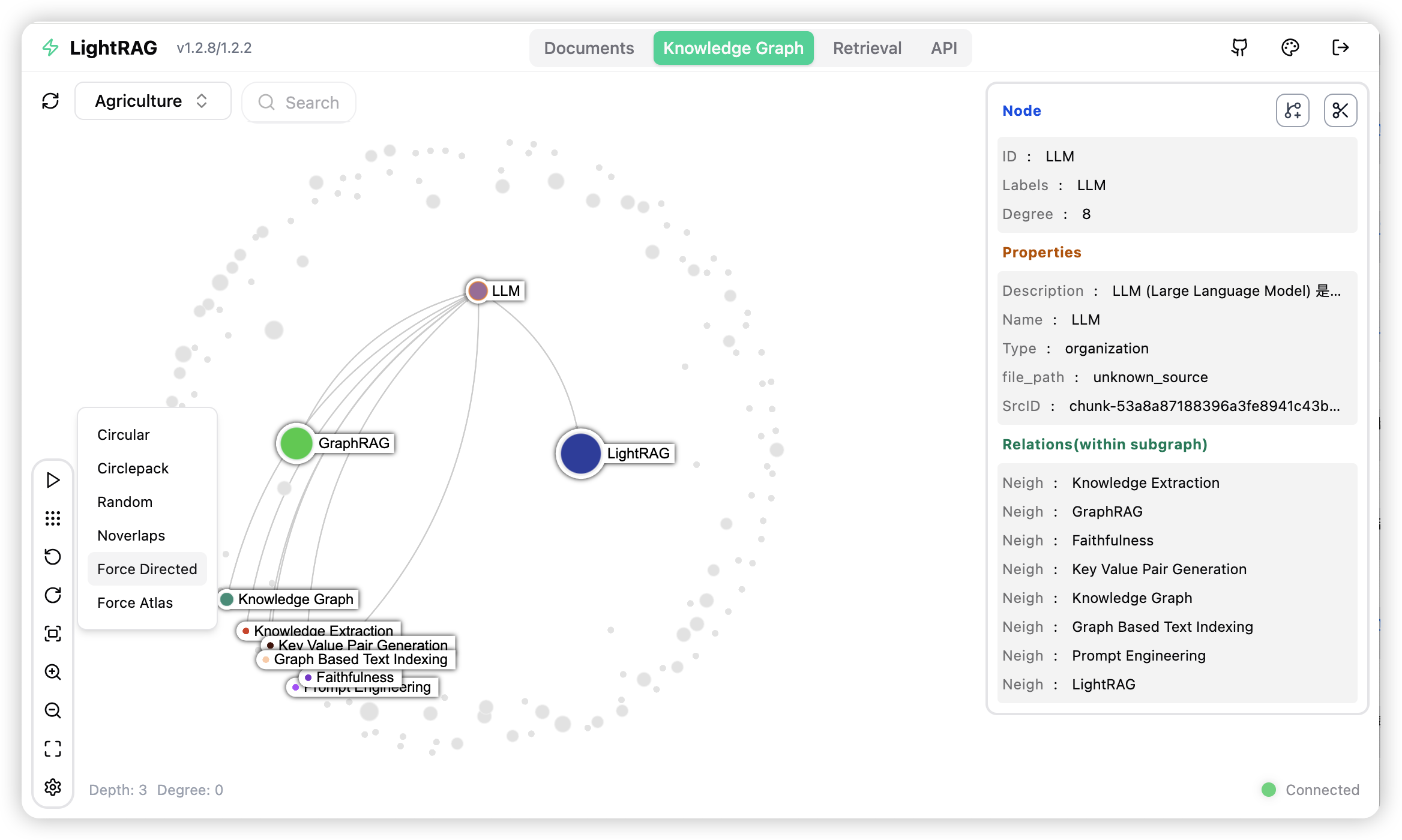

Embedding models such as BAAI/bge-m3 and text-embedding-3-large, and Reranker models such as BAAI/bge-reranker-v2-m3 or models provided by services like Jina, are suitable choices.

The LightRAG Server provides Web UI and API support and offers a comprehensive knowledge graph visualization feature. It supports various gravity layouts, node queries, subgraph filtering, and more. For more information about LightRAG Server, please refer to LightRAG Server.

To get started with LightRAG core, refer to the sample code in the examples folder. A video demonstration is also provided to guide you through the local setup process. If you already have an OpenAI API key, you can run the demo right away:

### run the demo code from the project folder

cd LightRAG

### provide your API-KEY for OpenAI

export OPENAI_API_KEY="sk-...your_openai_key..."

### download the demo document of "A Christmas Carol" by Charles Dickens

curl https://raw.githubusercontent.com/gusye1234/nano-graphrag/main/tests/mock_data.txt > ./book.txt

### run the demo code

python examples/lightrag_openai_demo.py

For a streaming response implementation example, please see examples/lightrag_openai_compatible_demo.py. Prior to execution, ensure you modify the sample code's LLM and embedding configurations accordingly.

Note 1: When running the demo program, please be aware that different test scripts may use different embedding models. If you switch to a different embedding model, you must clear the data directory (./dickens); otherwise, the program may encounter errors. If you wish to retain the LLM cache, you can preserve the kv_store_llm_response_cache.json file while clearing the data directory.

Note 2: Only lightrag_openai_demo.py and lightrag_openai_compatible_demo.py are officially supported samples. Other sample files are community contributions that have not undergone full testing and optimization.
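The cleanup described in Note 1 can be scripted. The following is a minimal sketch, not part of LightRAG itself: the helper name `clear_working_dir` is hypothetical, and it assumes the data directory is `./dickens` and that the LLM cache lives in `kv_store_llm_response_cache.json` directly inside it, as the note states.

```python
import shutil
from pathlib import Path

# File name taken from Note 1; everything else in the directory is removed.
LLM_CACHE_FILE = "kv_store_llm_response_cache.json"


def clear_working_dir(working_dir="./dickens", keep=(LLM_CACHE_FILE,)):
    """Delete every entry in working_dir except the names listed in keep.

    Hypothetical helper (not a LightRAG API). Returns the sorted names of
    the entries that were removed.
    """
    root = Path(working_dir)
    removed = []
    if not root.is_dir():
        return removed  # nothing to clear yet
    for entry in root.iterdir():
        if entry.name in keep:
            continue  # preserve the LLM response cache
        if entry.is_dir() and not entry.is_symlink():
            shutil.rmtree(entry)
        else:
            entry.unlink()
        removed.append(entry.name)
    return sorted(removed)
```

Call `clear_working_dir()` before switching embedding models; pass `keep=()` to wipe the cache as well.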

For the complete Core API reference — including init parameters, QueryParam, LLM/embedding provider examples (OpenAI, Ollama, Azure, Gemini, HuggingFace, LlamaIndex), reranker injection, insert operations, entity/relation management, and delete/merge — see docs/ProgramingWithCore.md.

⚠️ If you would like to integrate LightRAG into your project, we recommend utilizing the REST API provided by the LightRAG Server. LightRAG Core is typically intended for embedded applications or for researchers who wish to conduct studies and evaluations.
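As a rough illustration of the REST route, the sketch below builds a query request with only the Python standard library. The base URL `http://localhost:9621`, the `/query` path, and the `query`/`mode` field names are assumptions that this document does not confirm; check the LightRAG Server API documentation for your version and adjust them. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumed values (not confirmed by this document): adjust to your deployment.
BASE_URL = "http://localhost:9621"
QUERY_PATH = "/query"


def build_query_request(question, mode="hybrid", base_url=BASE_URL):
    """Build (but do not send) a JSON POST request for a RAG query."""
    payload = json.dumps({"query": question, "mode": mode}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}{QUERY_PATH}",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question, mode="hybrid", timeout=60.0):
    """Send the query to a running server and return the decoded JSON body."""
    request = build_query_request(question, mode)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)
```

With a server running, `ask("What are the top themes in this story?")` would return the parsed response. Keeping request construction separate from sending makes it easy to add authentication headers if your server requires them.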

LightRAG provides additional capabilities including token usage tracking, knowledge graph data export, LLM cache management, Langfuse observability integration, and RAGAS-based evaluation. See docs/AdvancedFeatures.md.

LightRAG Server includes a multimodal document pipeline for PDFs, Office documents, images, tables, and formulas. Parsing is handled through external MinerU or Docling services, while multimodal indexing runs in the LightRAG pipeline. For setup details, see docs/AdvancedFeatures.md.

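LightRAG outperforms NaiveRAG, RQ-RAG, and HyDE on every metric across the agriculture, computer science, legal, and mixed domains. Against GraphRAG, it leads on every metric in the first three domains; on the mixed dataset GraphRAG is narrowly ahead on comprehensiveness, empowerment, and overall, while LightRAG leads on diversity. For the full evaluation methodology, prompts, and reproduction steps, see docs/Reproduce.md.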

Overall Performance Table

| | Agriculture | | CS | | Legal | | Mix | |
|---|---|---|---|---|---|---|---|---|
| | NaiveRAG | LightRAG | NaiveRAG | LightRAG | NaiveRAG | LightRAG | NaiveRAG | LightRAG |
| Comprehensiveness | 32.4% | 67.6% | 38.4% | 61.6% | 16.4% | 83.6% | 38.8% | 61.2% |
| Diversity | 23.6% | 76.4% | 38.0% | 62.0% | 13.6% | 86.4% | 32.4% | 67.6% |
| Empowerment | 32.4% | 67.6% | 38.8% | 61.2% | 16.4% | 83.6% | 42.8% | 57.2% |
| Overall | 32.4% | 67.6% | 38.8% | 61.2% | 15.2% | 84.8% | 40.0% | 60.0% |
| | RQ-RAG | LightRAG | RQ-RAG | LightRAG | RQ-RAG | LightRAG | RQ-RAG | LightRAG |
| Comprehensiveness | 31.6% | 68.4% | 38.8% | 61.2% | 15.2% | 84.8% | 39.2% | 60.8% |
| Diversity | 29.2% | 70.8% | 39.2% | 60.8% | 11.6% | 88.4% | 30.8% | 69.2% |
| Empowerment | 31.6% | 68.4% | 36.4% | 63.6% | 15.2% | 84.8% | 42.4% | 57.6% |
| Overall | 32.4% | 67.6% | 38.0% | 62.0% | 14.4% | 85.6% | 40.0% | 60.0% |
| | HyDE | LightRAG | HyDE | LightRAG | HyDE | LightRAG | HyDE | LightRAG |
| Comprehensiveness | 26.0% | 74.0% | 41.6% | 58.4% | 26.8% | 73.2% | 40.4% | 59.6% |
| Diversity | 24.0% | 76.0% | 38.8% | 61.2% | 20.0% | 80.0% | 32.4% | 67.6% |
| Empowerment | 25.2% | 74.8% | 40.8% | 59.2% | 26.0% | 74.0% | 46.0% | 54.0% |
| Overall | 24.8% | 75.2% | 41.6% | 58.4% | 26.4% | 73.6% | 42.4% | 57.6% |
| | GraphRAG | LightRAG | GraphRAG | LightRAG | GraphRAG | LightRAG | GraphRAG | LightRAG |
| Comprehensiveness | 45.6% | 54.4% | 48.4% | 51.6% | 48.4% | 51.6% | 50.4% | 49.6% |
| Diversity | 22.8% | 77.2% | 40.8% | 59.2% | 26.4% | 73.6% | 36.0% | 64.0% |
| Empowerment | 41.2% | 58.8% | 45.2% | 54.8% | 43.6% | 56.4% | 50.8% | 49.2% |
| Overall | 45.2% | 54.8% | 48.0% | 52.0% | 47.2% | 52.8% | 50.4% | 49.6% |
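To make the table easier to read at a glance, the short sketch below tallies the "Overall" rows. The LightRAG percentages are copied from the table above; treating each baseline/LightRAG pair as a head-to-head share (so anything above 50% counts as LightRAG being preferred) is an assumption based on each pair summing to 100%.

```python
# LightRAG "Overall" percentages from the table above, per baseline and domain.
DOMAINS = ("Agriculture", "CS", "Legal", "Mix")
LIGHTRAG_OVERALL = {
    "NaiveRAG": (67.6, 61.2, 84.8, 60.0),
    "RQ-RAG": (67.6, 62.0, 85.6, 60.0),
    "HyDE": (75.2, 58.4, 73.6, 57.6),
    "GraphRAG": (54.8, 52.0, 52.8, 49.6),
}


def tally(results=LIGHTRAG_OVERALL, domains=DOMAINS):
    """Split (baseline, domain) pairs by whether LightRAG exceeds 50%."""
    wins, losses = [], []
    for baseline, rates in results.items():
        for domain, rate in zip(domains, rates):
            (wins if rate > 50.0 else losses).append((baseline, domain))
    return wins, losses


wins, losses = tally()
print(f"LightRAG preferred in {len(wins)} of {len(wins) + len(losses)} comparisons")
print("Baseline preferred in:", losses)
```

On these numbers the only comparison where the baseline comes out ahead is GraphRAG on the Mix dataset, and the margin there is under one percentage point.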

Ecosystem & Extensions

| 📸 RAG-Anything | 🎥 VideoRAG | ✨ MiniRAG |
|---|---|---|
| Multimodal RAG | Extreme Long-Context Video RAG | Extremely Simple RAG |

@article{guo2024lightrag,

title={LightRAG: Simple and Fast Retrieval-Augmented Generation},

author={Zirui Guo and Lianghao Xia and Yanhua Yu and Tu Ao and Chao Huang},

year={2024},

eprint={2410.05779},

archivePrefix={arXiv},

primaryClass={cs.IR}

}